www.my-spot.com > Bayer Patterns and Raw Images

Abstract: Many software designers and imaging experts have tried to develop Bayer Pattern demosaicing algorithms that produce output which more closely resembles the original image or scene. All RAW converters that extract an image from Bayer Pattern sensors (or similar) have drawbacks of one kind or another. This FAQ addresses some of the more common questions about Bayer Patterns and RAW camera images.

Q: What is a RAW image file?

A: All RAW files consist of two things:

Meta Data: a record of the state of the camera or recording device at the moment the image was taken. This includes items such as Date, Time, ISO, Shutter Speed, and a host of other items.

Image Data: in most cases, the unmodified data exactly as it is output from the A to D converters (normally 12-bit linear). Each 12-bit value is a record for either a Red, Green, or Blue site of the Bayer pattern. Thus a 6MP camera records 1.5M Red, 3M Green, and 1.5M Blue pixels.
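
As a quick check of that arithmetic, here is a minimal Python sketch that counts the photo sites of each color. It assumes an RGGB tile layout and a hypothetical 3000 x 2000 (6MP) sensor; real cameras use various tile orders and dimensions, and the function names are made up for this illustration.

```python
def bayer_color(row, col):
    """Color of the photo site at (row, col), assuming an RGGB tile layout."""
    if row % 2 == 0:
        return "R" if col % 2 == 0 else "G"
    return "G" if col % 2 == 0 else "B"


def count_sites(height, width):
    """Count photo sites per color without visiting every site."""
    even_rows, odd_rows = (height + 1) // 2, height // 2
    even_cols, odd_cols = (width + 1) // 2, width // 2
    return {
        "R": even_rows * even_cols,                        # even row, even column
        "G": even_rows * odd_cols + odd_rows * even_cols,  # the two mixed cases
        "B": odd_rows * odd_cols,                          # odd row, odd column
    }


# Hypothetical 6MP sensor: 2000 rows by 3000 columns
print(count_sites(2000, 3000))
# {'R': 1500000, 'G': 3000000, 'B': 1500000}
```

Half of all sites are Green, and Red and Blue get a quarter each, which is where the 1.5M / 3M / 1.5M split comes from.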

Q: What does a RAW converter do?

A: Basically a raw converter (including the generation of an in-camera jpeg) does the following...

- Read Bayer Pattern Data (or raw file)

- Scale Brightness values of each photo site/color based on White Balance values.

- Demosaic (convert) the Bayer pattern into RGB data.

- Load a device (camera) color profile and an output color profile and create a conversion matrix. When each RGB pixel is put through the matrix the RGB values are adjusted for more accurate color. (see more below)

- Post process the data (deal with highlights, sharpness, saturation, noise reduction, etc)

- Save an output file (tiff, jpeg, or dump to a program such as PS).
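
To make the white balance step in the list above more concrete, here is a minimal Python sketch of scaling each photo site by a gain chosen for its color. The RGGB layout, the gain values, and the sample data are all assumptions for illustration; a real converter reads the gains from the Meta Data or derives them from the image.

```python
def bayer_color(row, col):
    """Color of the photo site at (row, col), assuming an RGGB tile layout."""
    if row % 2 == 0:
        return "R" if col % 2 == 0 else "G"
    return "G" if col % 2 == 0 else "B"


def apply_white_balance(mosaic, gains):
    """Scale every photo site by the gain for its color (no clipping here)."""
    return [
        [value * gains[bayer_color(r, c)] for c, value in enumerate(line)]
        for r, line in enumerate(mosaic)
    ]


# Hypothetical raw readings of a neutral gray patch:
# the red and blue sites read lower than the green sites.
mosaic = [
    [500, 1000, 500, 1000],   # R G R G
    [1000, 400, 1000, 400],   # G B G B
]
gains = {"R": 2.0, "G": 1.0, "B": 2.5}  # assumed multipliers

balanced = apply_white_balance(mosaic, gains)
print(balanced)
# [[1000.0, 1000.0, 1000.0, 1000.0], [1000.0, 1000.0, 1000.0, 1000.0]]
```

After scaling, every site of the gray patch carries the same value, which is what lets the later steps treat it as neutral.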

Q: What sets RAW converters apart?

A: Three things set RAW converters apart when it comes to the actual conversion of the RAW data.

- The ability to extract an RGB image from Bayer Pattern.

- How the converter deals with (clips or reconstructs) "blown highlights".

- How (really "which") color profiles are used for output.

Q: What is the big deal about Bayer Pattern conversion? What makes it so tough?

A: Full color images usually consist of picture elements (pixels) that each contain at least three components, most typically Red, Green and Blue. Raw images based on Bayer Patterns have only one of the three color components at each pixel location. The challenge is reconstructing the missing two components at each location. Developers face an uphill battle and many tradeoffs in the quest to create the best results. Put simply, when your input starts out with only 33% of the data of your output, there are going to be inaccuracies. The trick is to select the tradeoffs that result in the fewest inaccuracies. The other trick is to do so in a way that has a low computational cost.
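
To show where the inaccuracies come from, here is a minimal Python sketch of plain bilinear interpolation, about the simplest possible way to fill in the missing components. It is not ACC, AHD, or any other routine named in this FAQ. The RGGB layout, the function names, and the test data (a gray scene with a hard vertical edge) are assumptions for illustration.

```python
def bayer_color(row, col):
    """Color of the photo site at (row, col), assuming an RGGB tile layout."""
    if row % 2 == 0:
        return "R" if col % 2 == 0 else "G"
    return "G" if col % 2 == 0 else "B"


def demosaic_bilinear(mosaic):
    """Fill each missing component with the average of the same-colored
    sites in the surrounding 3x3 window. Needs a mosaic of at least 2x2."""
    height, width = len(mosaic), len(mosaic[0])
    image = []
    for r in range(height):
        out_row = []
        for c in range(width):
            sums = {"R": 0.0, "G": 0.0, "B": 0.0}
            counts = {"R": 0, "G": 0, "B": 0}
            for rr in range(max(r - 1, 0), min(r + 2, height)):
                for cc in range(max(c - 1, 0), min(c + 2, width)):
                    color = bayer_color(rr, cc)
                    sums[color] += mosaic[rr][cc]
                    counts[color] += 1
            pixel = {k: sums[k] / counts[k] for k in sums}
            # The component that was actually measured is kept as-is.
            pixel[bayer_color(r, c)] = float(mosaic[r][c])
            out_row.append((pixel["R"], pixel["G"], pixel["B"]))
        image.append(out_row)
    return image


# Gray scene: black on the left (0), bright on the right (100).
edge = [[0, 0, 100, 100] for _ in range(4)]
print(demosaic_bilinear(edge)[1][1])
# (50.0, 25.0, 0.0)
```

The site at row 1, column 1 lies in the black half of the scene, so the true value is (0, 0, 0). Bilinear interpolation borrows from the bright side of the edge and invents a colored pixel, which is the kind of tradeoff better routines try to avoid at a higher computational cost.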

Q: What is the best Bayer Pattern conversion algorithm?

A: Simply: there isn't one! Currently the industry favorite is the AHD routine and its derivatives. However, the author's ACC* routine does a better job in many cases. Of course, every image has qualities that one routine handles better than another. ACC may be better in many, AHD better in others, VNG in yet others, ECW in still others. Noise, the amount of detail, and saturated color can all have a bearing on which routine is best for a given image. There is NO perfect solution, but it seems that every month a new version of an older routine is tweaked to get better results. (See: "What makes it so tough?" above.)

Q: Why are Camera Manufacturers so reluctant to publish specifications for their raw file formats?

A: The short answer is “I don’t know”. The long answer is fairly easy to guess at. First, Control! Camera manufacturers are often praised for the unique image qualities of their cameras. For example, in the past, Konica-Minolta was often praised for their dSLR’s color rendition and skin tones. The fact is that when it comes to getting these things out of an MRW file (the KM raw file format), the actual camera has little to do with it. The truth is that the same raw file processed through two different converters will give two very different sets of image and tonal qualities. So let’s say you convert a raw file using the XYZ raw converter, the colors look horrible, the skin tones make everyone look like dead people, and you post the images on the internet anyway. Who gets the bad rap? The camera manufacturer!

Second, Money! The camera manufacturers would much rather have you buy their raw conversion software…

Fortunately, many people actually enjoy decoding the various raw data formats and publishing the results.

Q: When I look very closely at some of my photos, there are red and blue fringes in areas of high contrast. Why?

A: This can be caused by two things. The first is a lens defect called Chromatic Aberration. This is when the Red, Green and Blue components of light do not come together in exactly the same place. It is usually more prominent in the corners of photographs and is usually the result of the design of the camera lens. APO and more expensive lenses with special lens elements (such as SD and ED elements) are far less prone to this kind of problem.

The second cause is the Bayer Pattern routines used to create the output image, either in the camera (in the case of in-camera jpeg files) or in the raw conversion program. Most Bayer Pattern demosaicing routines do not render high contrast areas in a way that avoids this kind of color fringing. It is possible, but most developers have not yet discovered the secret.

Q: In some of my photos I see this really wild looking color pattern "effect". What is it?

A: You are probably seeing something known as Moiré. In theory this is most often caused when the subject has more detail than the resolution of the camera (or image) can record. This results in lower frequency harmonics that appear as “waves” in your image. However, many Bayer Pattern demosaicing routines also make this artificially worse. In that case the cause is often related to the same thing that causes color fringing.
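
The "lower frequency" effect can be shown with a few lines of Python. This is only a one-dimensional sketch with made-up stripe frequencies: it samples a cosine stripe pattern once per pixel, and then once every second pixel, which is how far apart the Red (or Blue) sites of a Bayer Pattern sit along a row.

```python
import math


def sample_stripes(cycles_per_pixel, n_samples, step=1):
    """Sample a cosine stripe pattern at pixel positions 0, step, 2*step, ..."""
    return [
        math.cos(2 * math.pi * cycles_per_pixel * step * k)
        for k in range(n_samples)
    ]


def same_samples(a, b):
    """True when two sample lists match to within rounding error."""
    return all(math.isclose(x, y, abs_tol=1e-9) for x, y in zip(a, b))


# Sampling every pixel: stripes at 0.9 cycles/pixel are recorded exactly
# like much coarser stripes at 0.1 cycles/pixel.
print(same_samples(sample_stripes(0.9, 20), sample_stripes(0.1, 20)))  # True

# Sampling every second pixel (one color of the Bayer Pattern along a row):
# the confusion already starts at 0.4 cycles/pixel.
print(same_samples(sample_stripes(0.4, 20, step=2),
                   sample_stripes(0.1, 20, step=2)))  # True
```

Once the samples are identical, no converter can tell the fine pattern from the coarse one. Because each color is sampled more sparsely than the sensor as a whole, the colors can disagree about what they saw, and that disagreement shows up as colored waves.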

Q: I have noticed some artifacts that look like mazes or some crazy Greek wall trim. Why are these in my image?

A: This is a result of Bayer Pattern demosaicing routines trying to make sense of high frequency information. Many, if not most, routines have some kind of edge/detail direction sensing. When these routines hit a section of an image that tricks them into “thinking” the direction of some detail is other than the “real” direction, these maze artifacts can result. Sometimes, due to the nature of Bayer Patterns, the data have more than one mathematically valid solution for edge direction, and the routine chooses the "wrong" one.
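
Here is a minimal Python sketch of the kind of direction test involved. It estimates the missing Green value at a Red or Blue site from the four Green neighbors, interpolating along whichever direction changes least. This simple gradient comparison is an assumption chosen for illustration; it is not the ACC routine or any other specific routine, and the sample values are made up.

```python
def interpolate_green(left, right, up, down):
    """Estimate Green at a Red or Blue site from its four Green neighbors.

    Interpolates along the direction in which Green changes least and
    returns (estimate, direction). A tie falls back to a plain average.
    """
    horizontal_change = abs(left - right)
    vertical_change = abs(up - down)
    if horizontal_change < vertical_change:
        return (left + right) / 2, "horizontal"
    if vertical_change < horizontal_change:
        return (up + down) / 2, "vertical"
    return (left + right + up + down) / 4, "undecided"


# A clean vertical edge just to the right of the site: easy to call.
print(interpolate_green(left=10, right=90, up=10, down=10))
# (10.0, 'vertical')

# One-pixel-wide stripes: both directions look perfectly smooth.
print(interpolate_green(left=0, right=0, up=100, down=100))
# (50.0, 'undecided')
```

In the second case the answer could be 0 (horizontal detail) or 100 (vertical detail), and the four neighbors alone cannot say which. A routine that forces a choice will sometimes guess wrong, and when neighboring sites guess differently the result is a maze pattern.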

Q: Why do some edge details in my images have what almost looks like a zipper pattern?

A: This is the result of incorrectly interpreting the color changes that result from the alternating Red/Green or Blue/Green pixels of a Bayer Pattern. One common way of dealing with this is the use of strong edge detection routines. Many routines simply do not deal with these image areas correctly.

Q: Why don’t camera manufacturers improve the Auto White Balance routines in their cameras so pictures taken in tungsten light look as good as those taken in daylight?

A: They could, but the Auto White Balance routines would then run amok and make candlelight and flames look white as well. Basically, there would be far more complaints caused by this than there are now about less than perfect whites in tungsten light. Bad things would also happen to pictures with a lot of red or blue (such as a golden sunset or a macro shot of a pink rose). Really, we should be happy Auto White Balance is somewhat limited.
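
To see why an unlimited Auto White Balance would be a problem, here is a minimal Python sketch of the classic "gray world" method, which assumes the average of every scene should be neutral. This is a textbook approach used here only as an assumption for illustration; camera makers do not publish their actual routines. The pixel values are made up.

```python
def gray_world_gains(pixels):
    """Gains that make the average of the scene neutral (Green as reference).

    Assumes every channel has a non-zero average.
    """
    n = len(pixels)
    mean_r = sum(p[0] for p in pixels) / n
    mean_g = sum(p[1] for p in pixels) / n
    mean_b = sum(p[2] for p in pixels) / n
    return (mean_g / mean_r, 1.0, mean_g / mean_b)


def apply_gains(pixel, gains):
    """Scale one RGB pixel by per-channel gains."""
    return tuple(value * gain for value, gain in zip(pixel, gains))


# A hypothetical sunset: the whole frame is orange.
sunset = [(200, 100, 50)] * 4

gains = gray_world_gains(sunset)
print(gains)                          # (0.5, 1.0, 2.0)
print(apply_gains(sunset[0], gains))  # (100.0, 100.0, 100.0)
```

With no limit on the correction, the golden sunset comes out plain gray. Restricting how far the routine may go protects shots like this, at the price of a warm cast under tungsten light.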

Q: I have heard that very often there are 1 to 3 extra photographic stops of highlight detail present in RAW files compared to the jpeg image produced by the camera. Why is this extra detail “thrown away” or not used in the jpeg output?

A: One reason for throwing away or clipping this data is that our cameras can capture more levels of brightness than our computer displays and output devices can reproduce. In order to display all of the brightness/highlight detail available, the image must be reduced in contrast. This reduction is often great enough that the resulting images look dim, dull, and lifeless. Some raw conversion programs allow you to recover and use some or all of this data, but the highlights must be compressed in brightness in order for the overall image to look good. If done well, the results can be as natural looking as highlight areas in comparable film images.

Another reason has to do with White Balance scaling. This scaling causes the clipping of highlight data to happen at a different level for each color. Many programs that try to recover this data either recover only up to the lowest clipped value, or just leave the values clipped, which results in blue or pink overtones in the clipped areas. A few programs actually reconstruct the clipped data using reasonable assumptions and data from the unclipped channels. These programs tend to have the most natural looking results. Still, this extra recovered data must be “compressed” in brightness in order to be displayed on a monitor without looking flat, dim, or washed out.
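
The White Balance part of this answer can be worked through with numbers. The following Python sketch assumes 12-bit data (maximum value 4095) and made-up gains of 2.0, 1.0 and 1.5 for Red, Green and Blue; the function names are hypothetical.

```python
WHITE_LEVEL = 4095          # largest value 12-bit data can hold
GAINS = (2.0, 1.0, 1.5)     # assumed White Balance gains for R, G, B


def white_balance(raw_rgb):
    """Scale one RGB triple by the White Balance gains."""
    return tuple(value * gain for value, gain in zip(raw_rgb, GAINS))


def clip_to_lowest(balanced_rgb):
    """Clip every channel at the lowest level any channel can clip at."""
    limit = min(gain * WHITE_LEVEL for gain in GAINS)
    return tuple(min(value, limit) for value in balanced_rgb)


# A fully blown highlight: every channel hit the sensor maximum.
blown = white_balance((4095, 4095, 4095))
print(blown)                   # (8190.0, 4095.0, 6142.5)
print(clip_to_lowest(blown))   # (4095.0, 4095.0, 4095.0)

# A bright highlight in which nothing was clipped by the sensor.
bright = white_balance((3000, 1500, 2000))
print(bright)                  # (6000.0, 1500.0, 3000.0)
print(clip_to_lowest(bright))  # (4095.0, 1500.0, 3000.0)
```

Left as it is, the blown highlight has far more Red and Blue than Green, which displays as pink. Clipping everything at the lowest level makes it neutral again, but the second example shows the cost: a Red value the sensor recorded correctly is thrown away.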

Q: Why is the RAW converter I use so slow?

A: A good raw converter must make many calculations to produce the best “full resolution” results. Usually the best results require the converter to look at a pixel and its surrounding data many times, and this is computationally expensive. However, if a reduced output size (1/2 the linear dimensions, or 1/4 the megapixels) is all that is required, as is the case for most images destined for websites or emails, then very simple routines that do not require much computing overhead may (and should) be used.
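
One such simple routine is sketched below in Python: every 2x2 tile of the Bayer Pattern becomes a single RGB pixel, so nothing has to be interpolated from neighbors. The RGGB layout, the function name, and the sample values are assumptions for illustration.

```python
def half_size(mosaic):
    """Turn each 2x2 RGGB tile into one RGB pixel.

    Red and Blue are taken directly and the two Green sites are averaged.
    Any odd leftover row or column is ignored.
    """
    image = []
    for r in range(0, len(mosaic) - 1, 2):
        out_row = []
        for c in range(0, len(mosaic[0]) - 1, 2):
            red = mosaic[r][c]
            green = (mosaic[r][c + 1] + mosaic[r + 1][c]) / 2
            blue = mosaic[r + 1][c + 1]
            out_row.append((red, green, blue))
        image.append(out_row)
    return image


mosaic = [
    [10, 20, 30, 40],   # R G R G
    [22, 50, 44, 60],   # G B G B
]
print(half_size(mosaic))
# [[(10, 21.0, 50), (30, 42.0, 60)]]
```

Each output pixel costs one addition and one division, compared with the repeated neighborhood searches a full resolution routine needs.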

Q: What is all the fuss over Color Profiles and Color Management?

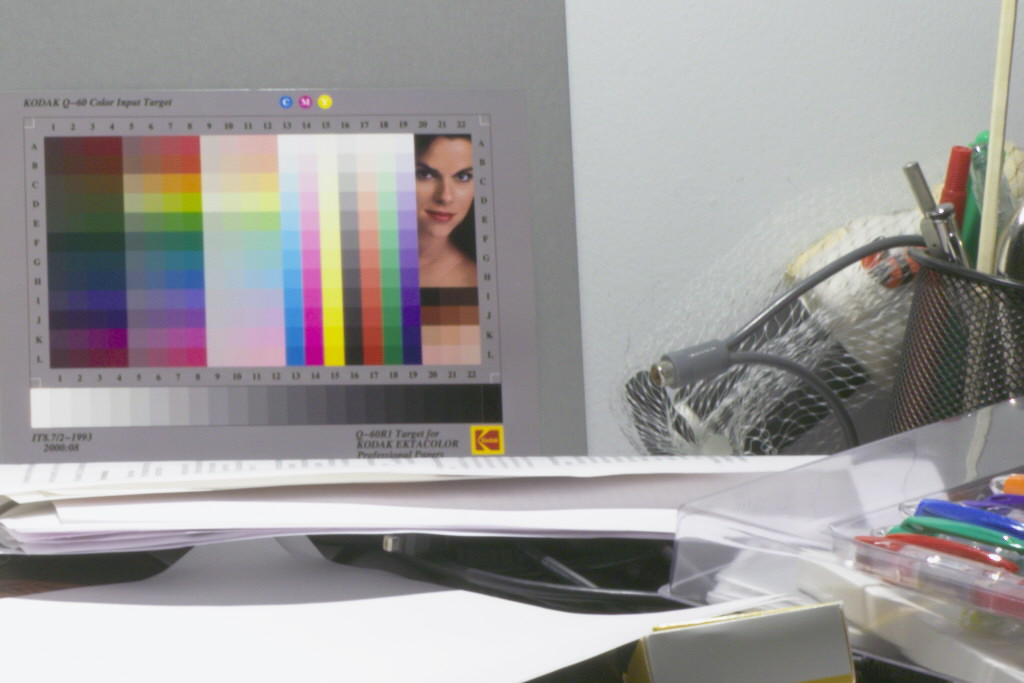

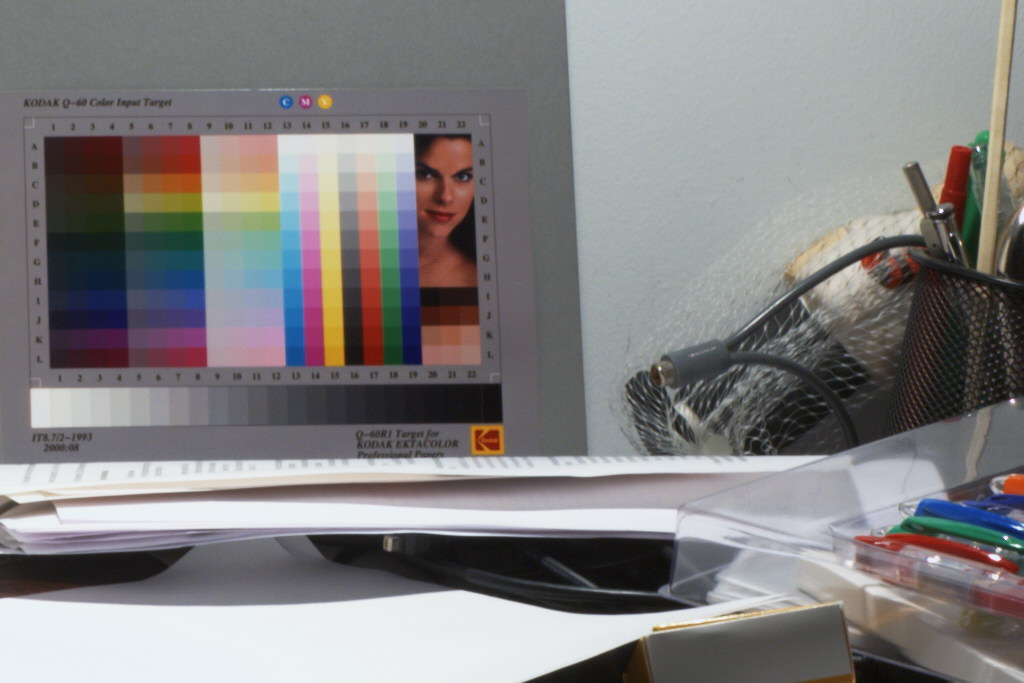

A: TWO things are needed to get accurate color images from a raw data set. One is correct white balance data (stored in the Meta Data or determined manually); the other is TWO color profiles: the camera (device) profile and an output profile. Almost all of the nice comments about how well a camera reproduces colors and skin tones can be attributed to the device color profile, NOT the camera. Note the two images shown with this question (100% crops), especially the skin tones and the yellows. The top image used a proper color profile conversion/correction, while the second image is "straight" from the camera with no color profile correction. Some may like the lower image, but the top image is more accurate.
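
For matrix-based profiles, combining the two profiles into one conversion matrix is ordinary matrix multiplication. The Python sketch below uses two made-up 3x3 matrices purely as placeholders; real values come from the profiles themselves, and real profiles may also contain tone curves or lookup tables that are not shown here.

```python
def mat_mul(a, b):
    """Multiply two 3x3 matrices."""
    return [
        [sum(a[i][k] * b[k][j] for k in range(3)) for j in range(3)]
        for i in range(3)
    ]


def apply_matrix(matrix, rgb):
    """Put one RGB pixel through a 3x3 matrix."""
    return tuple(sum(matrix[i][k] * rgb[k] for k in range(3)) for i in range(3))


# Hypothetical placeholder matrices (NOT taken from any real profile).
camera_to_common = [
    [1.5, -0.3, -0.2],
    [-0.2, 1.4, -0.2],
    [-0.1, -0.4, 1.5],
]
common_to_output = [
    [0.9, 0.1, 0.0],
    [0.0, 1.0, 0.0],
    [0.0, 0.1, 0.9],
]

# Build the single conversion matrix once, then use it for every pixel.
conversion = mat_mul(common_to_output, camera_to_common)

pixel = (120.0, 80.0, 40.0)
one_step = apply_matrix(conversion, pixel)
two_steps = apply_matrix(common_to_output, apply_matrix(camera_to_common, pixel))
print(one_step)
print(two_steps)
```

Both lines print the same color (up to rounding), which is why a converter can fold the two profiles into one matrix and pay for only one multiplication per pixel.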

* The owner of this site wrote the ACC routine. If you would like more information about the ACC routine, please feel free to contact him using the email link below.